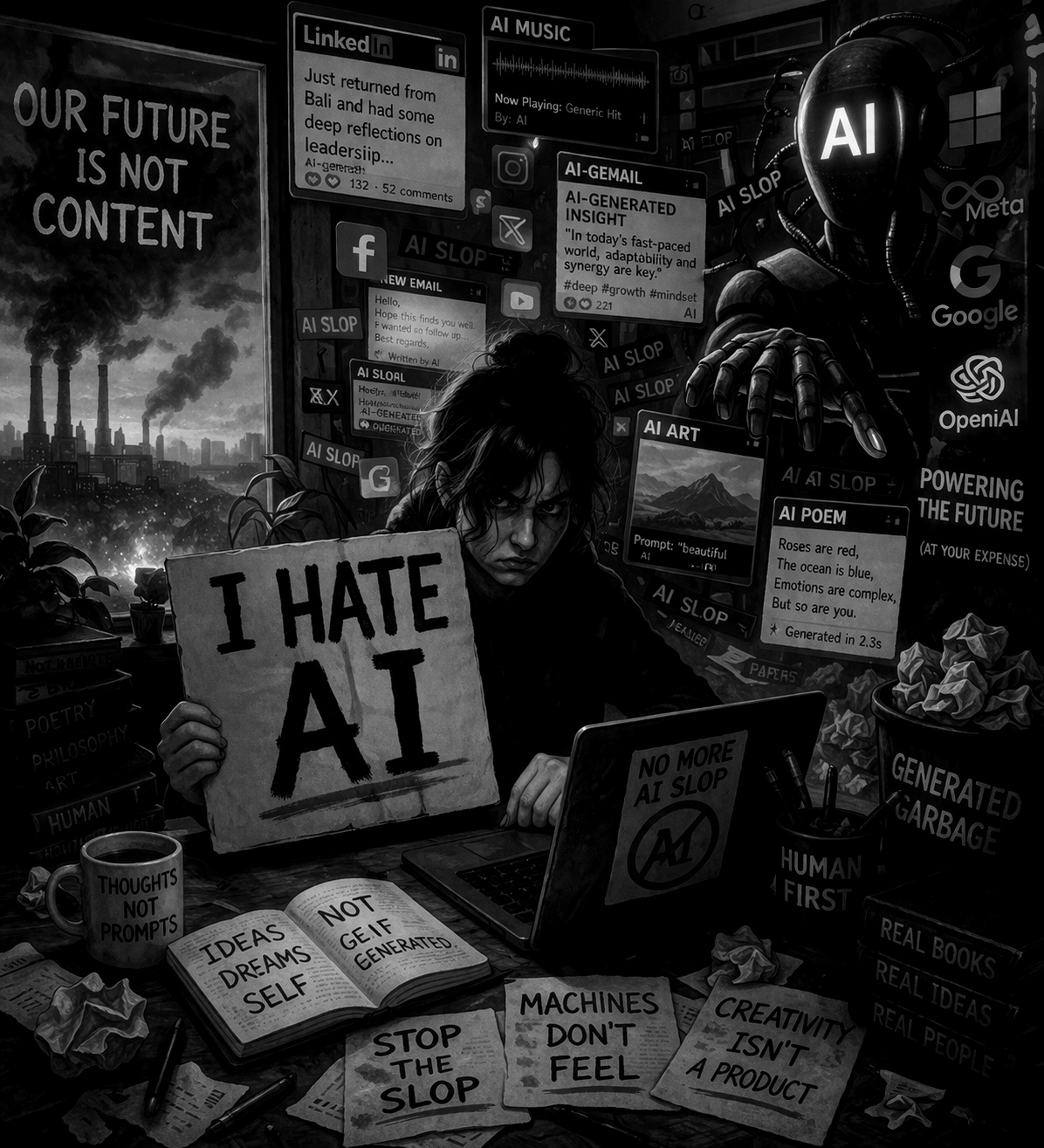

I Hate AI

Hardly Worth It

I hate AI. What is the point of it, really? What we must understand is that not everything that is invented makes us better; not every new technology is progress. What good has social media been? All it seems to do is waste our lives away. And now there is AI. I hate that it makes pop music. I hate that AI is now probably half of what I read. I hate that it steals bandwidth from other, nobler goals. Is it really worth continuing to destroy the environment in order to power AI?

I’m not insane; I can see the uses. Having a personalized doctor with the entire Merck Manual available instantly is great (though doctors might disagree). Having AI code econometric models is fantastic (though coders might disagree). One might love AI for improving medical care, or for search and rescue, as when drones and AI located the body of Nicola Ivaldo, who had gone missing in the Italian Alps. Those are real uses. They matter; I am not denying that. And in the future, yes, AI might help cure cancer (we hope).

What I am rejecting is the sales pitch that treats every new technical capability as if it were obviously a moral improvement. Because, again, not everything that is invented makes us better. Some things make us oblivious, more dependent, more distracted, more easily managed. Some things flatten us rather than improve us. And AI, as it appears in ordinary life, seems to be doing too much of that. We are becoming fake versions of ourselves, and that is not a price worth paying for less drudgery. If the bargain is that I get a few tedious tasks off my desk but lose something of my own voice, my own thinking, my authentic self, that seems like a bad trade. Convenience like this is not cheap. In fact, it looks very expensive.

That may be the part that bothers me most. Since the dawn of man, it has been humans who have created. Every book, every theory, every poem, every joke, every story, every piece of music was created by humans. Not anymore. This change is far more serious than the arrival of another labor-saving device. It is not only potentially very dangerous to our physical selves, but suicidal to our existential selves. What is it going to mean for us humans to live in a world increasingly shaped by a culture created by AI? I do not think that is a minor question. I think it is the central question of the age.

Think about how AI is slowly making us all sound the same, seemingly eradicating individuality before our very eyes. We are outsourcing thinking, we are outsourcing creation, and everybody seems to think this is the pinnacle of human achievement. I don’t like it. And I do not think that uniformity is a side effect; it may be the product. A system trained on aggregates gives back the average, sanded down, easy to consume, difficult to distinguish from thousands of other things written in the same minute. This is how individuality is slowly being murdered: not by old-school censorship, but by our lust for convenience and an endless stream of seductive suggestions.

Consider ghostwriters: once often frowned upon, now everybody has one. Worse, everybody has access to the same one. So of course the output starts to sound the same. There is a quote often misattributed to Hemingway: “Write drunk, edit sober.” With AI, it has become “write drunk, have the AI edit.” That alone already makes a lot of writing sound similar, and it is what the people who still value their own thoughts do. Others simply tell the AI “write this and that” and then copy-paste. The first approach still seems undesirable to me. The second is gross.

LinkedIn is a cesspool of this kind of writing, with most posters pretending to be insightful by publishing self-aggrandizing AI slop in place of any real commentary. It is annoying that our networks publish such a plethora of AI garbage and expect us to read it. In fact, it is outright rude, and I do not think that is an exaggeration. It wastes attention and assumes other people’s time is disposable. But worst of all, it treats us as idiots, asking us to pretend we cannot hear the machine behind the words.

The absurdity is especially obvious when people combine it with their recent holiday story, like so:

Sailing through Tahiti has a way of stripping things down to what actually matters.

Out there, you can’t fake efficiency. Every decision—when to adjust course, how to read the wind, what to prioritize—has immediate consequences. Waste motion, and you feel it. Ignore subtle signals, and you drift.

That same discipline applies to running organizations.

Creativity, I’ve found, doesn’t come from chaos. It comes from constraints. When resources are finite and conditions are constantly shifting, you’re forced to think differently—not for the sake of novelty, but for survival. You experiment, adapt, and refine in real time.

And efficiency isn’t about doing more. It’s about doing only what moves you forward. On a boat, unnecessary complexity is a liability. In organizations, it’s no different—extra layers, redundant processes, unclear priorities—they all slow momentum.

Sailing also reinforces something leaders often overlook: alignment matters more than effort. A crew pulling hard in slightly different directions loses to a coordinated team applying less force but in sync. Strategy without alignment is just noise.

Tahiti didn’t teach me new management theories. It clarified old ones:

• Pay attention to signals early

• Embrace constraints—they sharpen thinking

• Eliminate what doesn’t move the system forward

• Align people before scaling effort

The ocean is an unforgiving feedback loop. Organizations are slower—but the principles hold.

It took me literally seven seconds to “write” that. When writing like this is mass-produced and obviously designed to sound wise, it becomes hard not to see it as a kind of low-grade insult. A holiday photo, a machine-assisted sermon, a few management clichés, and we are all expected to hit “like.” That is not commentary; it is not even content. It is an insult. It is slop, or should we just call it shit?

Indeed, I’m sick and tired of reading this AI-generated text, routine and obvious as it is. Its most blatant habits include the false-dichotomy reversal, that pseudo-dialectical phrasing that says “it is not X, but Y,” or, as above, “Creativity, I’ve found, doesn’t come from chaos. It comes from constraints”; triadic structures like “In organizations, it’s no different—extra layers, redundant processes, unclear priorities—they all slow momentum”; hedged generalizations full of “often,” “can,” or “in many cases,” which avoid being wrong by avoiding saying anything at all; balanced contrast clauses (“while X, Y,” “although X, Y”) that try to manufacture symmetry where none is needed; restatements with slight variations that repeat an idea just to stretch the word count; mirrored phrasings; and grand conclusions such as “this highlights,” “this suggests,” and, worst of all, “this underscores.”

Now, the irony of using AI to help me with this article is not lost on me. My only justification is the half-baked notion that an editor, once a luxury reserved for professional writers, is now something we can all afford. Perhaps “hypocrisy” is also a fair term. But I recognize the contradiction: I am using the thing while criticizing the thing. I can even paraphrase the defense the thing itself offered: “I am not asking it to think for me, only to help arrange the furniture.” (“I’m not asking it to build the furniture, just to rearrange it” is my human edit.) But even that excuse feels spurious.

One scary habit arrives quietly: the tendency to validate original thoughts with the AI’s opinion. That may be one of the most degrading shifts. You have an idea, then almost immediately feel the urge to bounce it off the machine. Not because you have no thoughts of your own, but because the machine now sits there as an always-available, all-knowing validator. Journaling is another example: life reflections and dream notes alike now seem to need bouncing off the AI. This is dangerous, and all the more so because the practice does not seem unusual.

I now read less and find myself having conversations with AI instead. That is not nothing. Talking to a machine begins to crowd out talking to authors. You stop wrestling with a difficult paragraph because a quick answer is a prompt away. Your attention changes shape. Your patience weakens. Concentrated reading, which requires sitting with confusion for a while, starts to feel inefficient. This is a bad sign. When a technology makes reading feel like the slower, less attractive option, it is not really helping us; it is retraining us.

One New York Times commenter, “David,” put it bluntly: “AI is an insidious cancer on human creativity and critical thought all for nothing more than increased wealth for billionaires and the wanton destruction of our environment.” That is a more sweeping condemnation than some people will accept, but whatever else AI may be, it is not arriving out of altruism. It is being built and deployed inside systems that already reward scale, enclosure, extraction, and vanity. So when people say it is neutral, or inevitable, or progress, I do not agree.

Part of what makes this whole thing hard is that I trust it. Or at least I find myself trusting it. Whatever the current model’s hallucination rate, part of me thinks it still hallucinates less than most people do. Just instruct it to always follow its response with a level of confidence. It will often tell you it is not completely sure about what it is telling you. There is something reassuring about that display of uncertainty. It sounds disciplined, and more honest than many people.

So AI has convinced us we can trust it, but in reality we can’t, because it still makes mistakes, even on the simplest of requests. If we use it to scaffold our work, and even our actual thinking, then using it properly means also making sure it is not producing errors. This raises the question: is it really more efficient? Sometimes yes, perhaps. But often, no. It seems to save labor only to convert that labor into vigilance. The drudgery does not disappear. It changes form. You are no longer drafting from scratch; now you are checking the scaffold to see whether it is crooked. That may be worth it for some tasks, but for others, maybe not.

I am not arguing that every use of AI is worthless. Clearly it is not. I am arguing that the uses most visible in ordinary life seem hardly worth it. Slop in, slop out. Music with no blood in it. Literature that may soon feel at least a little fake. Emails with a synthetic smile on them, LinkedIn posts pretending to be profound, journal entries that cannot stand alone without machine reflection, dreams reflected upon with the assistance of a model. Original thoughts immediately checked against a chatbot. A person reading less, conversing more with software, and all the while calling it efficient. I do not see human flourishing in this picture.

And so I protest. And I ask: will I still be able to enjoy new art? Should I refuse to consume music and literature made after 2025 because it will probably be at least a little fake? Is this excessive? Probably, but I cannot shake the feeling that a threshold is being crossed. Once authorship becomes permanently uncertain, once every sentence and melody may have been machine-shaped at the point of origin, the experience of receiving art changes, and suspicion enters. The work no longer feels fully inhabited, because even a little fakeness is enough to alter the whole experience.

Some may say this is nostalgia; others will say tools have always changed art. True. Some might argue that the printing press, the synthesizer, the word processor, and the camera all triggered similar panic. Not quite, but even if they had, that would not settle the issue. The relevant question is not whether tools change art; of course they do. The question is whether this tool leaves the human relation to art intact. I am not persuaded that it does. Or rather, I am persuaded that in too many cases it does not.

So yes, there is irony in using AI to help write a rant against AI. But the larger point remains. I hate what this “tool” appears to be doing to people, to language, to attention, to confidence, to art, to the environment, to social life, to the private spaces where thoughts used to form without a machine standing nearby. We rightfully disparage social media for helping us waste our lives away. AI now looks ready to waste something deeper.

If the price of less drudgery is that we become fake versions of ourselves, that we all start sounding alike, that we outsource thinking and creation and then call this the pinnacle of human achievement, then I do not think the price is worth it. I do not want my ghostwriter in my pocket. I do not need my journal talking back. I definitely do not want every social media post to read like it came from the same managerial landfill. I do not want culture created by a thing that does not care whether what it says is true or beautiful. I do not want to become the sort of person who checks with AI before trusting his own thought.

Hardly worth it.